
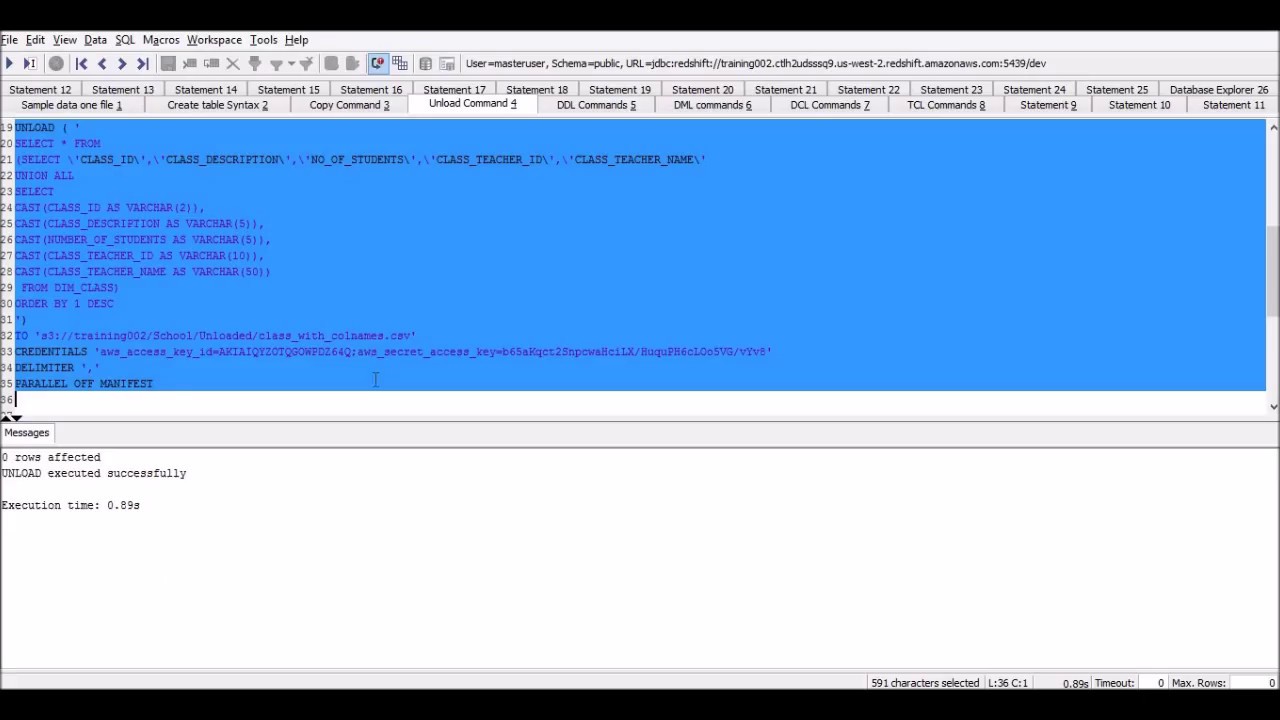

If not, you may get an error similar to this: ERROR: S3ServiceException:The bucket you are attempting to access must be addressed using the specified endpoint. copy one_column ("number")ĬREDENTIALS 'aws_access_key_id=XXXXXXXXXX aws_secret_access_key=XXXXXXXXXXX' Below is an example of a COPY command with these options set:įor a regular COPY command to work without any special options, the S3 bucket needs to be in the same region as the Redshift cluster. The solution is to adjust the COPY command parameters to add “COMPUPDATE OFF” and “STATUPDATE OFF”, which will disable these features during upsert operations. For example, they may saturate the number of slots in a WLM queue, thus causing all other queries to have wait times. In the below example, a single COPY command generates 18 “analyze compression” commands and a single “copy analyze” command:Įxtra queries can create performance issues for other queries running on Amazon Redshift. Even if the COPY command determines that a better encoding style exists, it’s impossible to modify the table’s encoding without a deep copy operation. In Redshift, the data encoding of an existing table cannot be changed.

Performing a COPY when the table already has data in it.Performing a COPY into a temporary table (i.e.In the following cases, however, the extra queries are useless and should be eliminated: Redshift runs these commands to determine the correct encoding for the data being copied, which may be useful when a table is empty. Improving Redshift COPY Performance: Eliminating Unnecessary Queriesīy default, the Redshift COPY command automatically runs two commands as part of the COPY transaction:

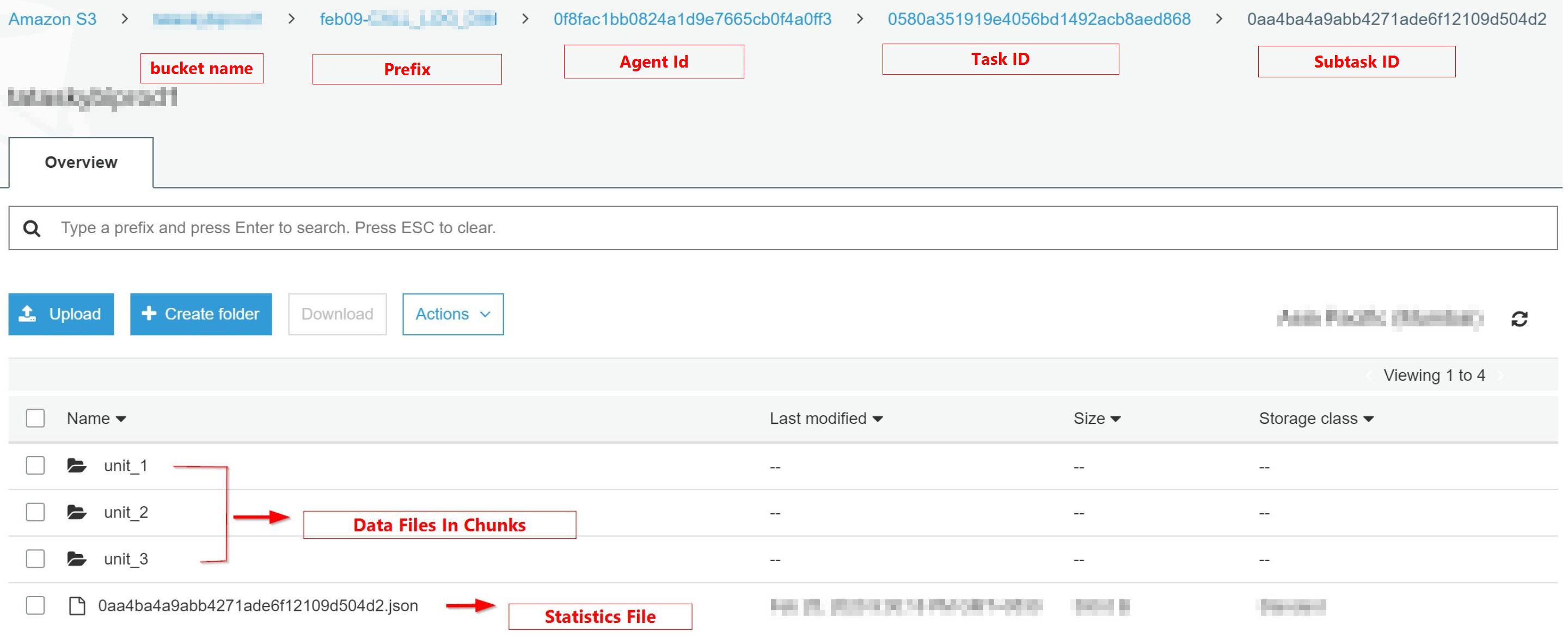

Fortunately, the error can easily be avoided, though, by adding an extra parameter. The error message given is not exactly the clearest, and it may be very confusing. This can easily happen when an S3 bucket is created in a region different from the region your Redshift cluster is in. Some people may have trouble trying to copy their data from their own S3 buckets to a Redshift cluster. If you have any questions, let us know in the comments! How to load data from different s3 regions You can learn more about the exact usage here. If it is not, you need to let it know by using the FORMAT AS parameter. If the source file doesn’t naturally line up with the table’s columns, you can specify the column order by including a column list in your COPY command, like so: copy catdemo (column1, column2, etc.)ĪWS assumes that your source is a UTF-8, pipe delimited text file. Here’s a simple example that copies data from a text file in s3 to a table in Redshift: copy catdemoįrom 's3://awssampledbuswest2/tickit/category_pipe.txt' If the source file doesn’t naturally line up with the table’s columns, you can specify the column order by including a column list. Authorization to access your data source (usually either an IAM role or the access ID and secret key of an IAM user).
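The two fixes described in this post, pointing COPY at a bucket in another region and switching off the automatic analysis queries, can be sketched as a small Python helper that assembles the statement text. This is only an illustration: `build_copy_statement`, the table name, the bucket path, and the role ARN are all made-up placeholders, and the helper assumes its inputs are trusted values rather than user input. The options it emits (`IAM_ROLE`, `REGION`, `COMPUPDATE OFF`, `STATUPDATE OFF`) are standard COPY parameters.

```python
def build_copy_statement(table, s3_path, iam_role, region=None, skip_analysis=False):
    """Assemble a Redshift COPY statement as a string (hypothetical helper).

    Inputs are assumed to be trusted; nothing is escaped here.
    """
    parts = [
        f"COPY {table}",
        f"FROM '{s3_path}'",
        f"IAM_ROLE '{iam_role}'",
    ]
    if region is not None:
        # REGION tells COPY which AWS region the S3 bucket lives in,
        # which is needed when it differs from the cluster's region.
        parts.append(f"REGION '{region}'")
    if skip_analysis:
        # Turn off automatic compression analysis and statistics updates,
        # e.g. when loading a staging table during an upsert.
        parts.append("COMPUPDATE OFF")
        parts.append("STATUPDATE OFF")
    return "\n".join(parts) + ";"


if __name__ == "__main__":
    # Placeholder table, bucket, role ARN, and region; substitute your own.
    print(build_copy_statement(
        table="staging_table",
        s3_path="s3://example-bucket/data/part-",
        iam_role="arn:aws:iam::123456789012:role/ExampleRedshiftRole",
        region="us-west-2",
        skip_analysis=True,
    ))
```

Leaving `region` unset and `skip_analysis` false yields a plain COPY, which is the form that only works when the bucket and the cluster share a region.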
